I have to say U-Net is a great neural network architecture, especially for medical image segmentation. Every year, many papers are published that fix or replace some of U-Net's modules and apply the result to various medical problems. My first deep learning project also used a U-Net-like structure (a convolutional autoencoder) for intracranial lumen segmentation.

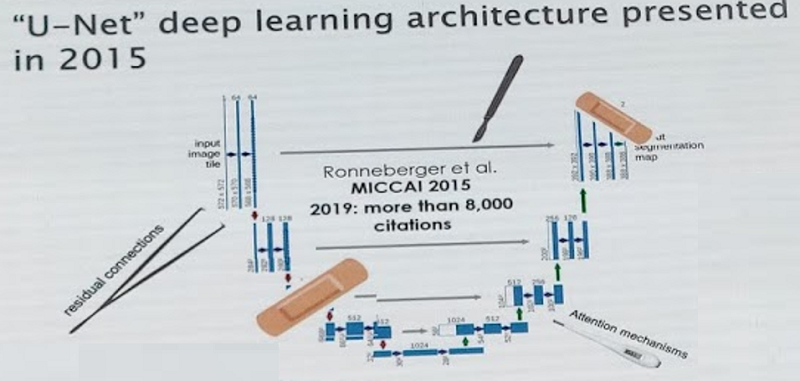

At MICCAI 2019, there was a figure mocking the current "state-of-the-art" U-Net variants. It looks very interesting.

However, beyond the publication itself, what additional value do these papers offer?

It has almost become a convention that as long as the evaluation metrics show a higher score, a new method is considered valuable, even if it is built on an existing model with only minor modifications. Sometimes the improvement is so small that the advantage would likely disappear if the network were simply trained again. In the medical field, few people are willing to share data or code, which makes it very difficult to repeat the experiments and means the so-called innovations are hard to validate.
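One rough way to check whether a reported gain survives retraining is to train both models several times with different random seeds and compare the mean gain with the run-to-run spread. The sketch below is only an illustration: the function name `gain_vs_seed_noise`, the "two times the spread" threshold, and the Dice scores are all hypothetical and do not come from any real experiment.

```python
from statistics import mean, stdev


def gain_vs_seed_noise(baseline_scores, variant_scores):
    """Compare the mean gain of a variant with the run-to-run spread.

    Each list holds one score (e.g. Dice) per retraining with a different seed.
    Returns (gain, noise, convincing).
    """
    gain = mean(variant_scores) - mean(baseline_scores)
    # Use the larger of the two sample standard deviations as a rough noise level.
    noise = max(stdev(baseline_scores), stdev(variant_scores))
    # Assumed rule of thumb: a gain below twice the noise is not convincing.
    return gain, noise, gain > 2 * noise


# Hypothetical Dice scores from five retrainings each (illustrative only).
baseline = [0.842, 0.851, 0.838, 0.847, 0.844]
variant = [0.848, 0.840, 0.853, 0.845, 0.849]

gain, noise, convincing = gain_vs_seed_noise(baseline, variant)
print(f"mean gain = {gain:.4f}, seed noise = {noise:.4f}, convincing = {convincing}")
```

With these made-up numbers the mean gain is smaller than the spread between seeds, which is exactly the situation where an advantage can vanish on retraining. A proper comparison would use a statistical test and an independent test set, but even this simple check needs several training runs, which single-run papers do not report.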

From the medical side, these papers have even less value. Doctors care about the real-world use of new techniques. A model claimed to perform better on one dataset is unlikely to be generally superior on real, new datasets. What really matters in medical research is whether the task can be solved well or not at all. So it is not surprising that these U-Net family papers have become a joke in the eyes of medical people.

Deep learning model design is very flexible, which makes it easy to avoid duplicating other people's methods. It also means there are many more interesting things to explore, such as combining multiple deep learning models into an automated and understandable processing workflow, designing network structures that adapt better to new datasets, and training models with few manual annotations while maintaining good performance. The easiest publications, those applying deep learning to fully annotated open datasets on traditional topics, have probably already been written (the example I hate most is retinal vessel segmentation, with tons of new papers competing over the second decimal digit of accuracy), but there are certainly still many exciting things for researchers to discover.

So don't waste time tweaking U-Net to push performance to the extreme. Instead, talk with medical people to learn the clinical needs and technical gaps, and develop something remarkable with deep learning that can really benefit medical research or clinical practice.