As you may know, I am an Electrical Engineering (EE) student working as a research assistant in a Radiology lab. As a result, I work with people from both the technical and medical worlds, developing or applying new techniques to solve medical problems.

If you think about this position carefully, you will find it an interesting but tough job. I need to make progress in developing new or better algorithms to meet the EE graduation requirements, and I also need to develop something that can really be used clinically to explore open medical problems. Compared with other interdisciplinary settings for students, such as EE students in EE labs whose collaborators supply medical data, or medical students in hospitals who are interested in technical development, I clearly have much better resources. From the Radiology lab, I have access to large amounts of first-hand imaging data from multiple clinical studies acquired on our own MRI scanner, and multiple reviewers with clinical backgrounds are willing to label the data for machine learning projects. From the EE lab, I keep track of newly emerging techniques through regular lab meetings, and I can easily discuss technical problems with lab mates who have strong technical backgrounds. The downside, however, is the pressure from two advisors, attending meetings in both labs, and publishing papers that range from technical development to medical applications to clinical research. It feels like earning two PhD degrees at once.

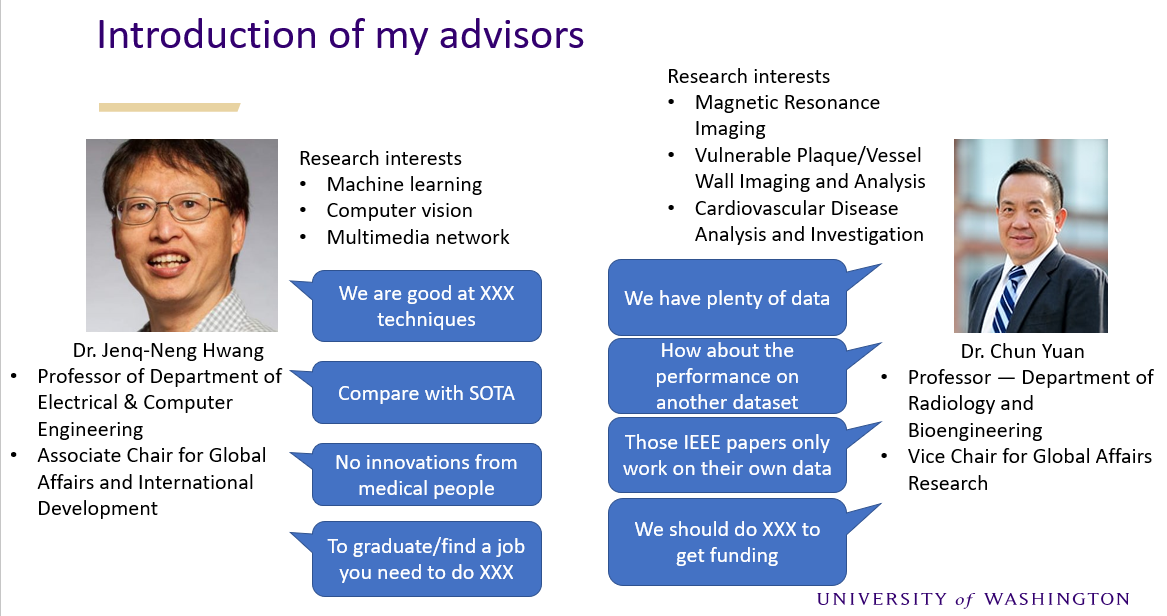

One benefit of working with people from various backgrounds is that I think more comprehensively about interdisciplinary research. During my research, I often hear people from one world criticizing the other. Engineers and technical researchers might argue that the medical world lacks the drive for excellence and stops improving methods as soon as they solve the problem at hand. Clinicians and medical researchers might argue that most technical developments exist merely for papers, have no practical use, and oversimplify complicated medical problems.

The good thing is that, by knowing the criticisms from both worlds, I can try to avoid those pitfalls and do meaningful research that satisfies both sides. Translated into requirements, the arguments from both worlds say that techniques for solving medical problems need to be both accurate and feasible. Accuracy means being technically strong, as demonstrated by state-of-the-art evaluation metrics. Feasibility means the method should generalize to data from multiple sites, acquired under different conditions and of varying quality, and, if possible, it should be user-friendly or fully automated. This is actually a far more challenging requirement than purely technical development. When publishing technical papers, you only care whether your fancy method is the best on your specific dataset, under your specific conditions, after your careful fine-tuning. As long as the evaluation metrics show your results are superior to all previous methods, you can get published, regardless of whether the method performs similarly on other datasets or in clinical settings. I personally consider such research a waste of time, but sadly much work like that still appears (for grants, graduation, promotion, or whatever) even at top conferences like MICCAI.
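
To make the feasibility criterion a bit more concrete, here is a minimal sketch of one way to check it: instead of reporting a single pooled Dice score, group test cases by acquisition site and look at the worst site. This assumes every case is tagged with a site label; the function names `dice` and `per_site_report` are hypothetical and this is not code from any of my tools.

```python
from collections import defaultdict

import numpy as np


def dice(pred: np.ndarray, label: np.ndarray, eps: float = 1e-7) -> float:
    """Dice similarity coefficient between two binary masks."""
    pred = pred.astype(bool)
    label = label.astype(bool)
    inter = np.logical_and(pred, label).sum()
    # eps keeps the score defined (and equal to 1) when both masks are empty
    return float((2.0 * inter + eps) / (pred.sum() + label.sum() + eps))


def per_site_report(preds, labels, sites):
    """Hypothetical helper: summarize Dice per acquisition site.

    A method tuned to one site shows up as a low worst-site mean even
    when the pooled mean looks good.
    """
    by_site = defaultdict(list)
    for pred, label, site in zip(preds, labels, sites):
        by_site[site].append(dice(pred, label))
    means = {site: float(np.mean(scores)) for site, scores in by_site.items()}
    pooled = [s for scores in by_site.values() for s in scores]
    return {
        "per_site_mean": means,
        "pooled_mean": float(np.mean(pooled)),
        "worst_site_mean": min(means.values()),
    }


if __name__ == "__main__":
    mask = np.zeros((4, 4), dtype=bool)
    mask[:2, :2] = True
    wider = np.zeros((4, 4), dtype=bool)
    wider[:2, :] = True
    # Site "A" is segmented perfectly; site "B" only partially overlaps.
    report = per_site_report([mask, mask, mask], [mask, mask, wider], ["A", "A", "B"])
    print(report)
```

Here the pooled mean (about 0.89) hides the fact that site "B" only reaches about 0.67, which is exactly the kind of gap a single-dataset benchmark can miss.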

I follow a very different approach to technical development for medical topics. My motivation for developing new techniques is problem-oriented rather than publication-oriented, which means everything I put effort into is ultimately designed to process real medical data and solve real medical problems. For example, the cerebral artery tracing tool I developed (iCafe) has to work for all 163 subjects in our dataset (in fact, it has worked for almost all of the 1000+ subjects processed to date). If the method fails for most subjects, I cannot get meaningful results from the dataset for a decent clinical paper. If the method requires a long time for manual correction or labeling, I have to do that painful manual work myself. If the method has bugs, I have no one else to blame. From this experience, I realized that the best way to develop a good tool is to force the developer to use it to process large amounts of data. Any user-unfriendly design or ad hoc behavior in the tool or algorithm gets eliminated, because otherwise the developer would end up cursing himself over and over for designing that stupid algorithm. In other words, publishing a technical journal paper should not be the end point for a technical developer; rather, it is the starting point for meaningful clinical research.

From the iCafe experience, I also realized that the ultimate solution for medical imaging research is artificial intelligence (AI). Given the large number of variables in medical projects, only a large number of subjects can ensure that our conclusions are sound and meaningful. On the other hand, that much data cannot be analyzed manually or semi-automatically. The solution is to develop fully automated AI tools for image review. Following that path, I borrowed the object tracking idea from the computer vision community, developed FRAPPE, and used it to analyze 3.5 million popliteal vessel wall images from the OAI dataset. This opens the door to many interesting studies on the relationship between the popliteal vessel wall and cardiovascular disease. A cardiovascular study on this scale could never have been dreamed of without the help of AI.

Another benefit of communicating with both worlds is that I can discover what is clinically useful when developing new techniques. For example, segmenting organs of interest is probably the most familiar topic for every researcher who claims to work on medical image processing. I am also developing vessel wall segmentation techniques to extract wall features from large datasets. Instead of chasing an additional 0.01 in Dice score, I am more interested in exploring how clinicians can use automatically generated segmentation results. One example is designing uncertainty scores that warn users when the automated segmentation algorithm is less confident, so they know where to pay attention. Another example: instead of expecting a human to identify the starting point or region for vessel wall analysis, I developed an automated artery localization model that locates the artery before analysis. I am surprised that technical researchers all seem to assume the region of interest is given for free.
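
As an illustration of the uncertainty idea, here is a minimal sketch that turns a per-pixel foreground probability map into a case-level score and flags uncertain cases for human review. It uses binary entropy as the uncertainty measure, which is just one common choice and not necessarily the score used in my own work; the function names, the `min_prob` cutoff, and the `threshold` value are all hypothetical assumptions for illustration.

```python
import numpy as np


def pixel_entropy(prob: np.ndarray, eps: float = 1e-7) -> np.ndarray:
    """Binary entropy (bits) of a per-pixel foreground probability map.

    Close to 0 where the model is confident (p near 0 or 1), 1 at p = 0.5.
    """
    p = np.clip(prob, eps, 1.0 - eps)  # avoid log2(0)
    return -(p * np.log2(p) + (1.0 - p) * np.log2(1.0 - p))


def case_uncertainty(prob: np.ndarray, min_prob: float = 0.05) -> float:
    """Hypothetical case-level score: mean entropy over candidate pixels.

    Only pixels with prob > min_prob are averaged, so the large, easy
    background does not dilute the score. Returns 0.0 if none qualify.
    """
    candidates = prob > min_prob
    if not np.any(candidates):
        return 0.0
    return float(pixel_entropy(prob)[candidates].mean())


def flag_for_review(prob: np.ndarray, threshold: float = 0.5) -> bool:
    """Flag a case for human review when its uncertainty exceeds threshold.

    The default threshold is an illustrative assumption; in practice it
    would be tuned on cases that reviewers actually had to correct.
    """
    return case_uncertainty(prob) > threshold


if __name__ == "__main__":
    confident = np.zeros((64, 64))
    confident[20:40, 20:40] = 0.99  # crisp wall prediction
    ambiguous = np.zeros((64, 64))
    ambiguous[20:40, 20:40] = 0.55  # model is unsure about the wall
    print(flag_for_review(confident))  # False
    print(flag_for_review(ambiguous))  # True
```

The point of a case-level flag rather than a raw uncertainty map is that a reviewer going through hundreds of subjects only needs to open the few cases the model is unsure about.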

There is a clear gap between the technical and medical worlds. Some people stuck on one side might never see it, and some might be too stubborn to admit it, but I am determined to bridge it. By developing novel techniques that are accurate, feasible, and useful, I want to be the bridge between the technical and medical worlds and help produce more impactful research.